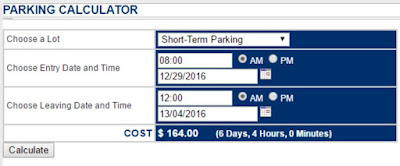

Let's take some requirements for a calculator that works out how much it will cost to park at an airport carpark:

Parking Calculator Application

I've written some test cases for each of these requirements. I've written these test cases in good faith following what proponents of test cases consider to be good practice. Each test case is written as unambiguously as possible; each step has an expected result with clear pass/fail criteria:

Test Cases for Parking Calculator

These test cases, as written, will pass. (For a slightly more complex observation of what happens when they are executed, see this article; but in theory, and in intention, they pass.)

As shown above, I've numbered the requirements, and you will see that there's at least one test case for each requirement. Here is a traceability matrix:

But wait

What about this?...or these?

A famous game we play in the context-driven testing community is to try and have the calculator calculate the largest cost we can.

The point, though, is that there are problems with the calculator. Problems that people who matter might be interested in. Problems made invisible, masked by a seductively green traceability matrix.

Even worse, many test management tools automatically generate the traceability matrix as a primary story-telling tool, creating bewitching tables with hypnotising statistics and no warnings.
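To make that concrete, here is a minimal sketch of how such a matrix might be derived. It is not taken from any real test management tool; the requirement IDs, test case IDs, and function names are all hypothetical, chosen only for illustration. The assumption is that the tool knows nothing beyond which test is linked to which requirement and whether that test passed.

```python
# Hypothetical data: test case ID -> (requirement IDs it is linked to, passed?)
TEST_RESULTS = {
    "TC-1": (["REQ-1"], True),
    "TC-2": (["REQ-2"], True),
    "TC-3": (["REQ-2", "REQ-3"], True),
}
REQUIREMENTS = ["REQ-1", "REQ-2", "REQ-3"]


def traceability_matrix(requirements, test_results):
    """Map each requirement to the passing test cases linked to it."""
    matrix = {req: [] for req in requirements}
    for test_id, (linked_reqs, passed) in test_results.items():
        for req in linked_reqs:
            if passed and req in matrix:
                matrix[req].append(test_id)
    return matrix


def coverage(matrix):
    """Fraction of requirements with at least one passing linked test."""
    if not matrix:
        return 0.0
    covered = sum(1 for tests in matrix.values() if tests)
    return covered / len(matrix)


matrix = traceability_matrix(REQUIREMENTS, TEST_RESULTS)
print(matrix)
print(f"coverage: {coverage(matrix):.0%}")  # prints "coverage: 100%"
```

Notice what the calculation never looks at: what each test actually checks. In this sketch the figure is 100% whether TC-3 tries one input or a thousand, because the only inputs are the links and the pass flags.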

You might look at my test cases and, even though I wrote them with honesty and good faith, find flaws in them. Surprise surprise, just like real life. But unlike real life, I'm not giving you a traceability matrix with no context.

Traceability matrices are like the sirens of mythology: enchanting, but very dangerous. Never take a traceability matrix at face value.

Nice post Aaron. As much as I dislike almost all testing metrics I do have one use for traceability matrices. Not enough for me to suggest that people use them unless they need to because they are in a regulated environment - but a use nonetheless.

If I have completed my testing (scripted or exploratory) and have spent the time to fill in the traceability fields, I might get useful information if I am expecting 100% coverage and get anything less. In other words, the requirements traceability coverage (or code coverage) metric can tell me if I have completely missed something. It cannot tell me that I have adequate coverage for any requirement, but it can tell me I have no coverage.

Once the 100% value is reached then the usefulness drops to zero and it is replaced with a dangerous false sense of security.

Yes! A traceability matrix is merely a signpost towards other information; it gives no information itself apart from "someone at some point has linked one model to another model". It can raise interesting questions, and be used to direct inquiry, but it's not a source of truth unto itself.
